Programmatic SEO is a complicated process, and some practices are straight-up bad for it, even if they might be fine for regular SEO.

In this post, I have collected a list of bad practices that you must know before jumping into doing programmatic SEO for your site.

Let’s take a look…

If you want more information, you can read our 4000-word guide on programmatic SEO.

Programmatic SEO bad practices

I have selected some common bad practices that you must avoid:

1. Selecting a keyword without checking the SERP

While selecting head terms, manually check the SERP for every head keyword you select to correctly understand the search intent. Yes, there are tools like SEMrush that show the search intent of keywords, but that data isn’t fully reliable and doesn’t take into account the modifiers you will be adding to these head keywords.

I recommend googling the keywords and checking the results that currently rank. If the SERP is not showing the kind of page you’re trying to rank, either modify your content to match or drop the keyword. Even if you do manage to rank for a mismatched term, the CTR (click-through rate) will be very low.

Unfortunately, you can’t automate this process. There isn’t a tool that can automatically and correctly predict the searcher’s intent for you.

2. Not interlinking posts with each other

Adding internal links to your blog posts is as important as creating backlinks, if not more.

While setting up the programmatic SEO process, most people don’t consider creating a system that will automatically interlink posts with each other. And that’s where they mess up.

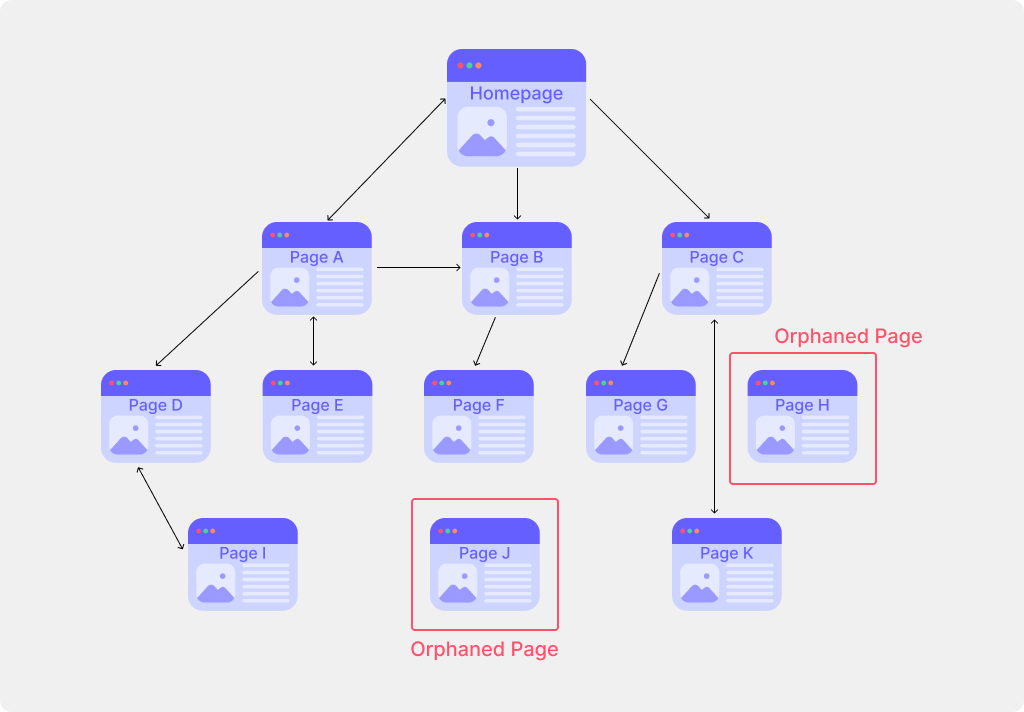

Pages that have no internal links pointing to them are called orphaned pages, and search engines don’t take them seriously. If you don’t care enough about those pages to link to them from other pages, why should the search engines care?

I recommend adding a “related”, “similar”, or “also see” section at the bottom of the page, but you can use other techniques as well.
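As a rough sketch of what such an automatic interlinking system could look like, here is one simple approach. It assumes every generated page is a row with a `slug` and a `category` (both hypothetical field names, your data may look different) and links each page to its neighbours in the same category:

```python
from collections import defaultdict

def build_related_links(pages, max_links=3):
    """Map each page slug to up to `max_links` other slugs in the same category."""
    by_category = defaultdict(list)
    for page in pages:
        by_category[page["category"]].append(page["slug"])

    related = {}
    for slugs in by_category.values():
        n = len(slugs)
        # A page cannot link to itself, so cap the count at n - 1.
        count = min(max_links, n - 1)
        for i, slug in enumerate(slugs):
            # Walk forward through the category in a ring so every page
            # both links out and receives links from its neighbours.
            related[slug] = [slugs[(i + k) % n] for k in range(1, count + 1)]
    return related

# Hypothetical example rows.
pages = [
    {"slug": "crm-for-dentists", "category": "crm"},
    {"slug": "crm-for-lawyers", "category": "crm"},
    {"slug": "crm-for-plumbers", "category": "crm"},
    {"slug": "invoicing-for-dentists", "category": "invoicing"},
]
links = build_related_links(pages, max_links=2)
```

Note that a page that is alone in its category ends up with an empty list here, so it would still be orphaned. In practice you would want a fallback for those, such as linking from a hub page.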

3. Relying too much on SEO tools

If you’re active in SEO communities and groups, you must have heard the advice about not relying too much on SEO tools. I have also said multiple times that the keyword volume and monthly visitor data they show are not accurate; they are just estimates.

DeepakNess (@DeepakNesss), January 15, 2022:

“You discovered a gold mine then! I think, monthly searches shown by all the SEO tools are underestimated. I posted about this in a Facebook group a year ago 👇 (old screenshot)” pic.twitter.com/8PKMwfz9wm

Instead, talk to your users and explore forums where they hang out and discuss things, and you will find some keywords that the tools won’t ever show.

In the guide to finding head terms for programmatic SEO, I have explained how you can brainstorm your own products and services to find out interesting keywords. There are also some other methods listed that don’t need any SEO tools either.

4. Not spending enough time preparing the page template

When generating pages programmatically, the template determines how the generated pages will look to the users and to the search engines.

To avoid any mistakes, you must spend enough time perfecting the template for the looks as well as optimizing it for the search engines, or should I just say Google? 😉

Also, make sure that the generated pages will have enough content on them and that they don’t get categorized as thin or low-quality content.
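One way to catch thin pages before they go live is to count the words on each rendered page. This is only a minimal sketch: the 300-word threshold is my own arbitrary assumption (not a Google rule), and `rendered_pages` is a hypothetical mapping of slug to generated HTML.

```python
import re

def word_count(html):
    """Count words in a page after crudely stripping HTML tags."""
    text = re.sub(r"<[^>]+>", " ", html)
    return len(text.split())

def find_thin_pages(rendered_pages, min_words=300):
    """Return slugs whose visible text has fewer than `min_words` words."""
    return [
        slug
        for slug, html in rendered_pages.items()
        if word_count(html) < min_words
    ]

# Hypothetical rendered output: one full page and one nearly empty page.
rendered_pages = {
    "crm-for-dentists": "<h1>CRM for dentists</h1><p>" + "word " * 400 + "</p>",
    "crm-for-lawyers": "<h1>CRM for lawyers</h1><p>Coming soon.</p>",
}
thin = find_thin_pages(rendered_pages)
```

Word count alone doesn’t prove a page is useful, but it quickly flags rows where the data was missing and the template rendered almost nothing.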

5. Not having enough server resources

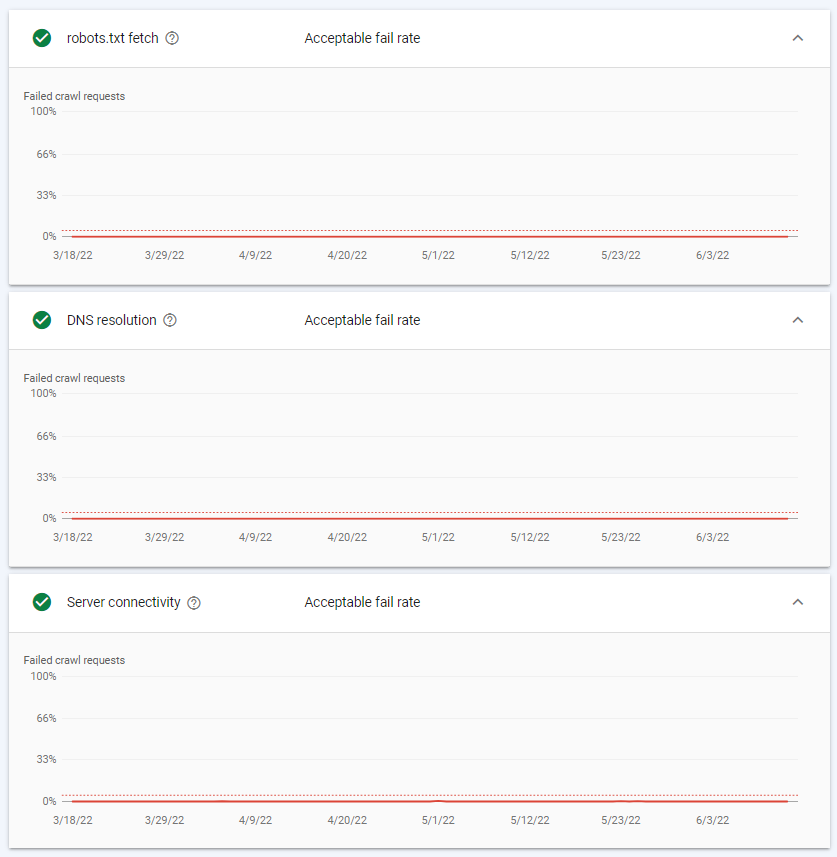

As there are tons of pages on a programmatic SEO website, you need to have enough free server resources so that the search engine bots can crawl the pages without hitting your server’s limits.

If the server gets overloaded, it simply stops responding to requests from users and bots, which ultimately results in delayed crawling (and indexing) or no crawling at all.

A misconfigured server that returns errors of any kind results in the same problem.

I recommend keeping an eye on the Host status section of the Crawl stats report in Google Search Console, especially robots.txt fetch, DNS resolution, and Server connectivity. Make sure that failed crawl requests are at 0% for all three, as in the screenshot above.

Bing Webmaster Tools offers similar reports as well.
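If you have access to your server logs, you can also do a rough check from your own side. The sketch below assumes the access log is in the common “combined” format and simply looks for “Googlebot” in the user agent (which can be spoofed, so treat the number as an estimate). It calculates the share of bot requests that got a 5xx response:

```python
import re

# Matches the status code and user agent in a combined-format access log line.
LOG_PATTERN = re.compile(r'" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"')

def bot_error_rate(log_lines, bot_token="Googlebot"):
    """Return the fraction of the bot's requests that got a 5xx response."""
    total = errors = 0
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if not match or bot_token not in match.group("agent"):
            continue
        total += 1
        if match.group("status").startswith("5"):
            errors += 1
    return errors / total if total else 0.0

# Made-up sample lines for illustration only.
sample = [
    '66.249.66.1 - - [10/Jan/2022:10:00:00 +0000] "GET /robots.txt HTTP/1.1" 200 120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Jan/2022:10:00:05 +0000] "GET /crm-for-dentists HTTP/1.1" 503 0 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Jan/2022:10:00:06 +0000] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
rate = bot_error_rate(sample)
```

In this made-up sample, one of the two Googlebot requests failed, so the rate is 0.5. On a healthy server you would want this to stay at or very near zero.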

6. Not taking Google Search Console errors and warnings seriously

When doing programmatic SEO, always keep Google Search Console notifications in check. Consider GSC as your best friend.

Google Search Console points out any errors or misconfigurations that it detects on the website. It often notifies you about server errors, hacked sites, sudden traffic hikes, user-friendliness of the pages, and other best practices.

For example, a while ago I received a warning titled “Submitted URL not found (404)” inside GSC and found out that this issue was being caused by a page that I deleted earlier. I fixed the issue, started the validation process, and it got resolved.
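This kind of warning usually means the sitemap still lists a URL that no longer exists. Here is a minimal sketch of how you could catch it yourself, assuming you can get a set of your currently published URLs from your CMS or database (`live_urls` below is a hypothetical stand-in for that):

```python
import xml.etree.ElementTree as ET

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def stale_sitemap_urls(sitemap_xml, live_urls):
    """Return sitemap URLs that are not in the set of currently published URLs."""
    root = ET.fromstring(sitemap_xml)
    listed = [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]
    return [url for url in listed if url not in live_urls]

# Hypothetical sitemap that still lists a deleted page.
sitemap_xml = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/crm-for-dentists</loc></url>
  <url><loc>https://example.com/crm-for-lawyers</loc></url>
</urlset>"""
live_urls = {"https://example.com/crm-for-dentists"}
stale = stale_sitemap_urls(sitemap_xml, live_urls)
```

Anything this returns should either be removed from the sitemap or redirected before Google reports it.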

7. Not having images on the pages

While images are not direct ranking signals, they definitely help the pages look good and improve user experience. And if users love your page, search engines most probably will too.

When setting up the programmatic SEO process, you should also program a system that adds optimized images on the pages. Of course, you will need to create the page template accordingly.

8. Creating garbage backlinks

Creating garbage or low-quality backlinks is not just bad for programmatic SEO; you should avoid them in any kind of SEO. They do more harm than good.

But… backlinks are important, and I recommend reaching out to other quality blogs in your niche for guest posting. It’s the best way to get a backlink in exchange for a well-written article.

9. Creating 1000s of pages at once

This might be controversial, and different SEOs might have different opinions about handling 1000s of pages.

But… in my opinion, drip-creating the pages is the best idea. For example, if you have to publish 5000 pages, first publish just 10-20 pages, wait for the Google Search Console data, and check for and fix any errors. If everything seems alright, you can start publishing 50-100 pages every week.

That said, publishing all the pages at once doesn’t generally cause any issues, but sometimes the indexing process takes longer than it does with drip-published pages.
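To get a feel for how long drip-publishing takes, here is a small sketch that turns the numbers above into a schedule. The function name and defaults are just my assumptions for illustration:

```python
def drip_schedule(total_pages, pilot_size=20, weekly_batch=100):
    """Return a list of batch sizes: one pilot batch, then weekly batches."""
    pilot = min(pilot_size, total_pages)
    remaining = total_pages - pilot
    full_weeks, leftover = divmod(remaining, weekly_batch)
    batches = [pilot] + [weekly_batch] * full_weeks
    if leftover:
        # The final week publishes whatever is left over.
        batches.append(leftover)
    return batches

schedule = drip_schedule(5000, pilot_size=20, weekly_batch=100)
weeks_after_pilot = len(schedule) - 1
```

With 5000 pages, a 20-page pilot, and 100 pages a week, that works out to 50 weekly batches after the pilot, so almost a year. At 50 pages a week it would take roughly twice as long, so pick the batch size with that timeline in mind.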

10. Keeping duplicate text snippets on the pages

While creating the page template, keep in mind that at least 80% of the page content should be replaced by unique content from your spreadsheet.

It’s really important to limit the text snippets that appear unchanged on all the programmatically generated pages. For that, you need to pay extra attention while creating the page template.
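A quick way to sanity-check a template against that 80% figure is to compare the static words in the template with the total words on a rendered page. This is a rough sketch that assumes a simple `{placeholder}` style template and a hypothetical data row. Punctuation glued to a placeholder would skew the count slightly, so treat the result as an estimate:

```python
import re

def unique_content_share(template, row):
    """Estimate the share of words on a rendered page that come from the data row."""
    rendered = template.format(**row)
    # Words left after removing every {placeholder} are the static boilerplate.
    static_words = len(re.sub(r"\{[^{}]*\}", " ", template).split())
    total_words = len(rendered.split())
    return 1 - static_words / total_words if total_words else 0.0

# Hypothetical template and spreadsheet row.
template = "Best {product} for {audience}\n\n{description}"
row = {
    "product": "CRM",
    "audience": "dentists",
    "description": "Track patient follow-ups, recall reminders, and treatment plans in one place.",
}
share = unique_content_share(template, row)
```

Here only 2 of the 15 rendered words come from the template itself, so about 87% of the page is row-specific. If the share drops well below 0.8 for many rows, the template probably carries too much repeated text.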

Final words

Before you get all mixed up in these suggestions and tips, just remember that you’re doing programmatic SEO to create pages that bring highly targeted users to your site. Put the user experience first, and you will be all good to go.

And that’s it.

If you have any related queries, feel free to let me know in the comments below.
