As soon as you hit publish on your programmatic pages, you reach the next level in the programmatic SEO game. Now, you have to get those pages indexed in search engines like Google.

You don’t need to worry much if you have generated fewer than 100 programmatic pages, but if you have 100s or even 1000s of pages, indexing them all can take a very long time.

There’s no magic button for faster indexing of pSEO pages, but certain tips and tricks do help.

Let’s take a look…

Speed up indexing for programmatic pages

Below, I have listed some important tips for accelerating the indexing process for your pSEO pages.

1. Improve internal linking

Pages that are not linked from any other page on your website are called orphaned pages, and search engines like Google hate them, especially if you have created 100s or even 1000s of them via programmatic SEO.

You didn’t care enough to add internal links to those pages and left them aside; then why should Google care?

Adding proper internal links to and from your programmatically generated pages helps Google discover and index the new pages faster. Aim to add at least 2 internal links on all your pages.

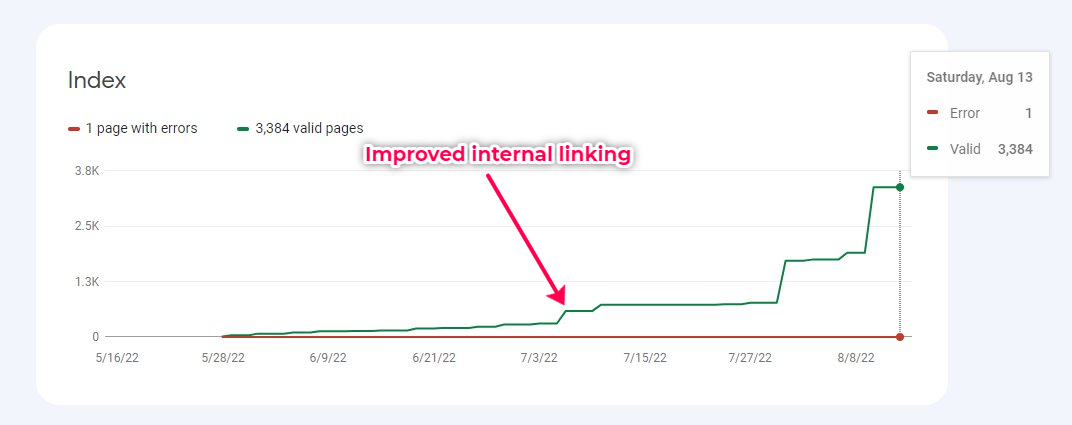

On one programmatic site that had no internal linking at all, I simply improved the internal linking, and you can see the spike in the number of indexed pages soon after.
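If you can export your site's internal links (from a crawler or your own page generator), a quick script can flag the pages that need attention. The sketch below is only an illustration: the `site` dictionary and the `pages_needing_links` helper are hypothetical, and it assumes the "at least 2 internal links" target refers to links pointing to each page.

```python
from collections import Counter

def pages_needing_links(outlinks, min_inbound=2):
    """Return pages that receive fewer than `min_inbound` internal links.

    `outlinks` maps each page path to the list of internal pages it links to.
    """
    inbound = Counter()
    for source, targets in outlinks.items():
        for target in set(targets):      # count each linking page once
            if target != source:         # a self-link does not help discovery
                inbound[target] += 1
    return sorted(page for page in outlinks if inbound[page] < min_inbound)

# Hypothetical link graph: page -> pages it links to
site = {
    "/": ["/tools/", "/tools/a/"],
    "/tools/": ["/tools/a/", "/tools/b/"],
    "/tools/a/": ["/tools/", "/"],
    "/tools/b/": [],
    "/tools/c/": ["/"],
}

print(pages_needing_links(site))                 # pages with 0 or 1 inbound links
print(pages_needing_links(site, min_inbound=1))  # true orphans only
```

With `min_inbound=1` the function returns only the orphaned pages, which are the ones to fix first.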

2. Get backlinks to the site

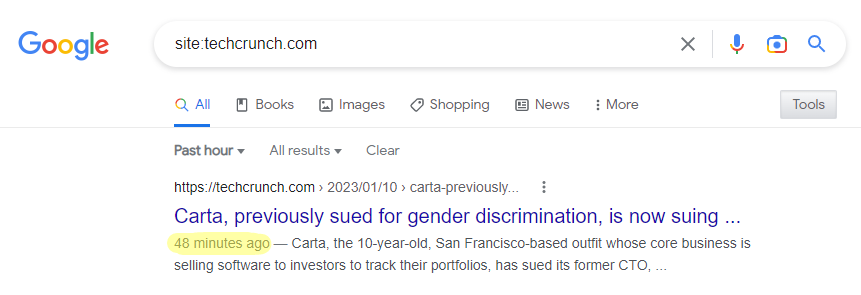

The more backlinks your site has, the faster newly generated pages will be crawled and indexed in Google. For example, TechCrunch has more than 700k pages indexed in Google, and its new posts get indexed within minutes, as the screenshot in this section shows.

I won’t say backlinks are the only factor affecting the indexing speed of your website, but they are one of the major factors. Backlinks help not only with faster indexing but also with ranking, so that’s an added bonus.

3. Submit your sitemap to search engines

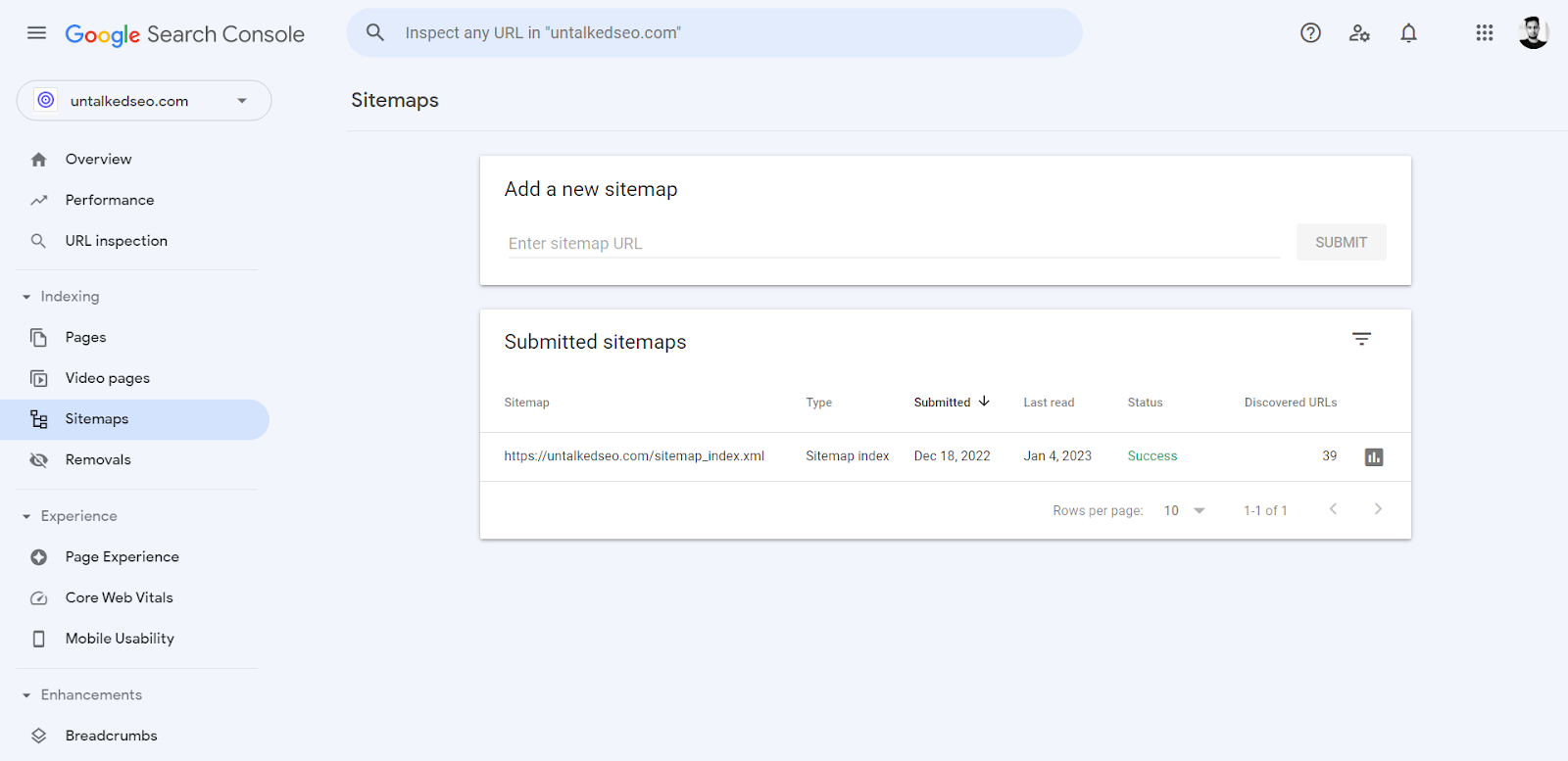

Google has Search Console and Bing has Webmaster Tools where you can submit your XML sitemap. It helps search engines understand your content and discover new pages easily.

If you’re creating only a few pages on your website then sitemaps are not that important, but if you are dealing with 1000s of pages, having a sitemap becomes crucial.

On WordPress, SEO plugins like Yoast and RankMath automatically handle sitemaps for you. But if you have a custom-built site, you may need to create it manually.

As per Google’s limits, a single sitemap should not contain more than 50,000 URLs and should not be more than 50 MB in size (uncompressed). If your site has more than 50,000 URLs, you can split them across multiple sitemaps and list those in a sitemap index file.

You can also use Google’s sitemap ping tool whenever you publish a new set of pages by simply going to this URL https://www.google.com/ping?sitemap=YOUR_SITEMAP as shown in the tweet. Note that Google announced in 2023 that it is retiring this ping endpoint, so confirm that it still works before relying on it.
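For a custom-built site, splitting a long URL list into several sitemaps can be scripted. Here is a minimal sketch using only Python's standard library. The `build_sitemaps` function and the `sitemap-N.xml` / `sitemap-index.xml` file names are my own assumptions, and it only enforces the 50,000-URL limit, not the 50 MB size limit.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000  # per-sitemap URL limit

def build_sitemaps(urls, base_url, max_urls=MAX_URLS):
    """Return {filename: xml_string} for the chunked sitemaps plus an index."""
    files = {}
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for n, start in enumerate(range(0, len(urls), max_urls), start=1):
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for url in urls[start:start + max_urls]:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
        name = f"sitemap-{n}.xml"  # hypothetical naming scheme
        files[name] = ET.tostring(urlset, encoding="unicode")
        # Register this chunk in the sitemap index
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = f"{base_url}/{name}"
    files["sitemap-index.xml"] = ET.tostring(index, encoding="unicode")
    return files

# Example with placeholder URLs: 120,000 pages end up in three sitemaps
pages = [f"https://example.com/p/{i}" for i in range(120_000)]
sitemaps = build_sitemaps(pages, "https://example.com")
print(sorted(sitemaps))
```

You would then write each entry to disk, prepend an XML declaration if you want one, and submit only the index file in Search Console.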

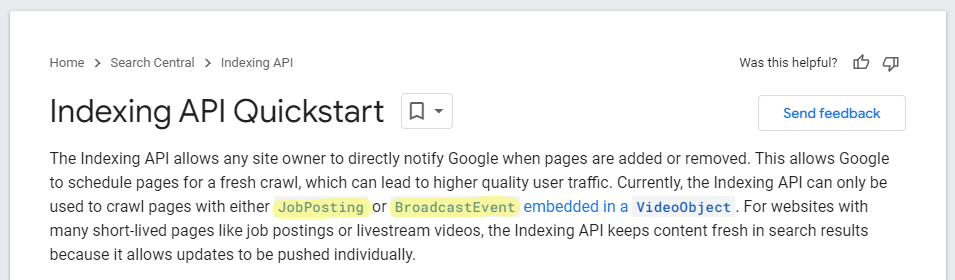

4. Use Google’s indexing API

Even though Google has clearly stated that its Indexing API is only for job posting and broadcast event type content, several people have confirmed that it works for other kinds of pages as well.

If you’re worried that using the API might hurt your website, don’t be: Google has confirmed that it won’t hurt. But it may not help either.

On WordPress, you can use RankMath’s special plugin to set up Google’s Indexing API. In this blog post, RankMath has explained everything in detail.
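On a custom-built site, you would call the API yourself. The sketch below shows roughly what a single "URL updated" notification looks like, as far as I understand Google's documentation. The `build_notification` helper is hypothetical, and it assumes you have already obtained an OAuth access token for a service account that has the Indexing API scope and is added as an owner of the property in Search Console.

```python
import json
import urllib.request

# Publish endpoint of Google's Indexing API (v3)
ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"

def build_notification(page_url, access_token, kind="URL_UPDATED"):
    """Build (but do not send) a notification request for one URL.

    `kind` is "URL_UPDATED" for new or changed pages, "URL_DELETED" for removals.
    `access_token` is assumed to come from your own OAuth/service-account setup.
    """
    body = json.dumps({"url": page_url, "type": kind}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {access_token}",
        },
    )

request = build_notification("https://example.com/tools/a/", "YOUR_ACCESS_TOKEN")

# Sending is left commented out because it needs a real token:
# with urllib.request.urlopen(request) as response:
#     print(response.status)
```

The API has a daily request quota, so for 1000s of pages you would need to spread the notifications over several days.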

5. Use Cloudflare’s crawler hints

If you’re trying to index your site faster in search engines like Bing and Yandex, then turning on the Crawler Hints option in Cloudflare might be helpful.

I have this turned on for my sites, but there’s no way to measure whether it’s accelerating the indexing speed. So I’m not entirely sure about it, but it’s said to be good enough that even Google has said it will be testing it.

Obviously, you need to be using Cloudflare for this. If you don’t use Cloudflare, just skip this tip; you don’t need to worry about it.

6. Check your robots.txt file

Make sure that no important pages or areas of your website are blocked from being crawled by search engine bots. In other words, no important page or area should appear in a rule like Disallow: /page/.

A regular robots.txt file should look something like the image above.

And if you’re worried about whether a specific page/area on your website is indexable or not, you can either use Google’s robots.txt tester or this simple tool by Merkle.
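If you would rather check a whole batch of programmatic URLs at once, Python's built-in `urllib.robotparser` can do a rough version of the same test. This is only a sketch: the `blocked_urls` helper, the sample rules, and the example URLs are made up for illustration, and Python's parser does not interpret wildcard patterns the way Googlebot does, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser

def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]

# Hypothetical robots.txt that accidentally blocks the /page/ area
robots = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /page/
"""

urls = [
    "https://example.com/page/tools-a/",
    "https://example.com/tools/a/",
]

print(blocked_urls(robots, urls))
```

Any important URL that shows up in the output is being blocked from crawling and needs its Disallow rule removed or narrowed.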

Final words

Did you notice that I left out a critical factor in getting indexed in Google and other search engines?

Yes, the quality of your programmatically generated pages. I have assumed that you are already creating high-quality content through programmatic SEO, and if you’re doing that, you’re good to go.

If you have any related queries, feel free to let me know in the comments below.
